Re-using a gaming GPU for LLaMa 2

November 02, 2023

Introduction

I’ve been experimenting with LLMs for a while now for various reasons.

Two of the most useful outcomes have been in text extraction of Maltese road names from unstructured addresses and for code generation of boilerplate scripts (the “write me a script to use GitHub’s API to rename all master branches to main” kind of script).

OpenAI

GPT-3.5 Turbo is a great model and very easy to get started with. However, at any sort of volume and with large prompts, the costs begin to add up.

As a result, I began looking into self-hosting my own LLM to take over these tasks. Even if responses were slower, at least they would be cheaper.

LLaMa

One of OpenAI’s biggest challengers is Meta with their LLaMa model. With the highly permissive licence of LLaMa 2 and the great work done in running these models locally (projects such as llama.cpp), it is now very easy to get started with self-hosting.

If however you have structured your project around OpenAI and their models, it would be much easier to somehow package these models in an API that mimics OpenAI’s API structure and responses. This makes it very easy to swap for a locally hosted model without having to change a lot of code.
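As a rough sketch of what that swap could look like: if the self-hosted server exposes the same `/v1/chat/completions` path as OpenAI, only the base URL needs to change. The local address, the helper name `build_chat_request` and the placeholder API key are assumptions for illustration, not something taken from a specific setup.

```python
import json
import urllib.request

OPENAI_BASE_URL = "https://api.openai.com/v1"
LOCAL_BASE_URL = "http://localhost:8000/v1"  # assumed address of the self-hosted server


def build_chat_request(base_url, model, user_message, api_key="placeholder-key"):
    """Build an OpenAI-style chat completion request against any compatible base URL."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
```

Calling `build_chat_request(LOCAL_BASE_URL, ...)` instead of `build_chat_request(OPENAI_BASE_URL, ...)` is then the only change the calling code needs, and the request can be sent with `urllib.request.urlopen`.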

Performance

One of OpenAI’s biggest benefits is the performance of the models. While some queries can take a long time to respond, in my experience most of them resolve within two seconds or less.

When self-hosting, especially if you are running on a CPU, performance leaves much to be desired. You can make improvements by using quantized models, but you risk losing output quality, and cross-testing multiple quantized versions is prohibitively time-consuming, especially for side projects.

Luckily, being a gamer, I have more GPUs than I need in my life and these models run much faster on GPUs.

This blog post covers the steps I went through to self-host LLaMa 2 on a gaming PC that I run headlessly in my home.

Steps

1: Put together the correct hardware

While this might seem like an obvious step, you should make sure the hardware you have is compatible with your aim. To keep this simple, the easiest way right now is to ensure you have an NVIDIA GPU with at least 6GB of VRAM that is CUDA compatible. This list can help.

In my case, the RTX 2060 has compute capability 7.5.
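Once the NVIDIA driver from step 3 is installed, the same check can be scripted. This is a minimal sketch that assumes `nvidia-smi` is on the PATH and that the driver is recent enough to support the `compute_cap` query field. The function names and the 6GB threshold constant are mine, chosen to mirror the guideline above.

```python
import subprocess

MIN_VRAM_MIB = 6 * 1024  # the 6GB floor suggested above, expressed in MiB


def parse_gpu_line(line):
    """Parse one CSV line of the form: name, memory.total (MiB), compute capability."""
    # Split from the right so a comma inside the GPU name would not break parsing.
    name, memory_mib, compute_cap = [part.strip() for part in line.rsplit(",", 2)]
    return {"name": name, "vram_mib": int(memory_mib), "compute_cap": compute_cap}


def has_enough_vram(gpu, minimum_mib=MIN_VRAM_MIB):
    """Return True if the GPU meets the VRAM floor."""
    return gpu["vram_mib"] >= minimum_mib


def query_gpus():
    """Ask nvidia-smi for every GPU it can see (requires the NVIDIA driver)."""
    result = subprocess.run(
        [
            "nvidia-smi",
            "--query-gpu=name,memory.total,compute_cap",
            "--format=csv,noheader,nounits",
        ],
        capture_output=True,
        text=True,
        check=True,
    )
    return [parse_gpu_line(line) for line in result.stdout.splitlines() if line.strip()]
```

Running `[has_enough_vram(gpu) for gpu in query_gpus()]` on the target machine gives a quick yes/no per card.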

2: Install the OS

I installed Ubuntu 22.04 LTS. In general it’s a good idea to stick to the latest LTS image: it results in fewer surprises and is more likely to have matured in terms of installation guides.

3: Install the required NVIDIA drivers/tools

I have used this guide from NVIDIA multiple times to the letter and have never regretted it.

You will also need to install the latest versions of docker, docker compose and the NVIDIA Container Runtime.

Note: This guide assumes you want to run things in a dockerised fashion. You can just as easily do it without docker and that’s a valid choice for many use cases. In my case, I went this way because down the line I plan to run it in a Kubernetes cluster.

4: Confirm all is running well

After finishing installing the drivers, reboot. If the machine boots up normally, congratulations! It isn’t the first time that Ubuntu + NVIDIA has broken for me if I look at it funny.

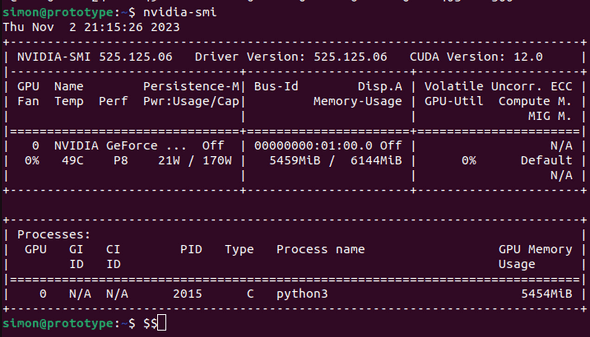

You can also run nvidia-smi to confirm that the GPU is recognised correctly. This is the output I get from mine:

5: Download the model from huggingface

Go to https://huggingface.co and find a GGUF version of LLaMa-2-7B-Chat.

Let’s break that down:

- huggingface is the premier website to find ML models

- GGUF is the format used by llama.cpp which is the library we will use to run the model

- LLaMa 2 and not 1 because the licensing of the first version was for research only

- 7B stands for the number of parameters, in this case 7 Billion. We’re opting for the smallest one to get things started

- Chat is the chat dialogue optimised model. You can get the non-chat model later to try it out and find the difference.

Here is a working link at time of writing.

6: Clone the repository for llama-cpp-python

Clone the following repository: abetlen/llama-cpp-python.

This will provide us with the API server.

7: Build the cuda_simple image

Go to `docker/cuda_simple` and build the Docker image using `docker build -t cuda_simple .`

Note: You must edit the CUDA_IMAGE argument at the top of this Dockerfile to match the version of CUDA on your machine.

8: Bring up the webserver

For my original use case (an LLM capable of answering questions about Maltese Law), I used a docker compose file to bring up multiple services. Here are the minimal parts I needed to bring up just the LLM API:

    version: '3.5'

    services:
      llama-api:
        image: cuda_simple:latest
        ports:
          - 8000:8000
        cap_add:
          - SYS_RESOURCE
        environment:
          USE_MLOCK: 0
          MODEL: /models/7B/llama-2-7b-chat.Q4_0.gguf
          N_GPU_LAYERS: 35
          MAX_TOKENS: 1024
          CONTEXT_WINDOWS: 2048
        volumes:
          - /home/simon/models:/models
        deploy:
          resources:
            reservations:
              devices:
                - driver: nvidia
                  count: 1
                  capabilities: [gpu]
        restart: unless-stopped

Breaking it down:

- llama-2-7b-chat.Q4_0.gguf: this is the filename of the 4-bit quantized model I downloaded from huggingface. This needs to match the filename that you downloaded. A less quantized version (meaning 5-bit, 6-bit, 8-bit, etc.) will take more RAM to run but may give better-quality output.

- N_GPU_LAYERS: this specifies the number of layers to run on the GPU. Ideally you run all of them to get the best performance, however your VRAM is a limiting factor here.

- /home/simon/models: this is the directory where I store models. The full path on the host in my case would be /home/simon/models/7B/llama-2-7b-chat.Q4_0.gguf.
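To get a feel for the trade-off between quantization level and VRAM, some back-of-the-envelope arithmetic helps. The sketch below estimates only the raw size of the weights as parameters × bits ÷ 8. It is a deliberate simplification: real GGUF files are somewhat larger because quantized formats store extra scaling data, and the context cache needs memory on top of that. The function name is mine.

```python
def estimate_weights_gb(n_params, bits_per_weight):
    """Rough size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Compare a few quantization levels for a 7B model against a 6 GB card.
for bits in (4, 5, 6, 8):
    size_gb = estimate_weights_gb(7e9, bits)
    print(f"{bits}-bit: ~{size_gb:.1f} GB of weights, under 6 GB: {size_gb < 6}")
```

By this estimate a 4-bit 7B model needs about 3.5 GB for its weights, while an 8-bit one needs about 7 GB, which would not fit entirely on a 6GB card. In that situation you would lower N_GPU_LAYERS so that only part of the model is offloaded.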

To bring it up, you can do docker-compose up

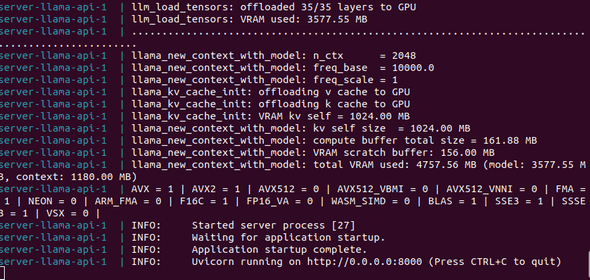

If all works as expected, you should see the following logs:

Testing

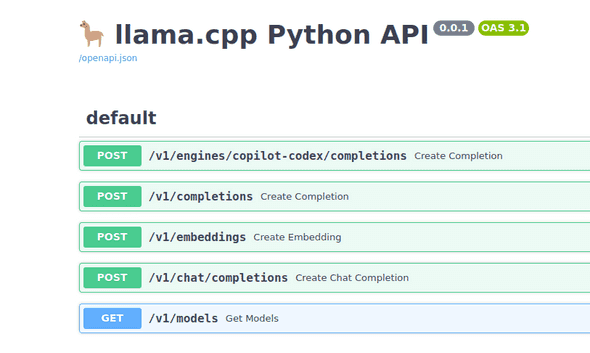

You should be able to access the API docs at http://<host_ip_address>:8000/docs

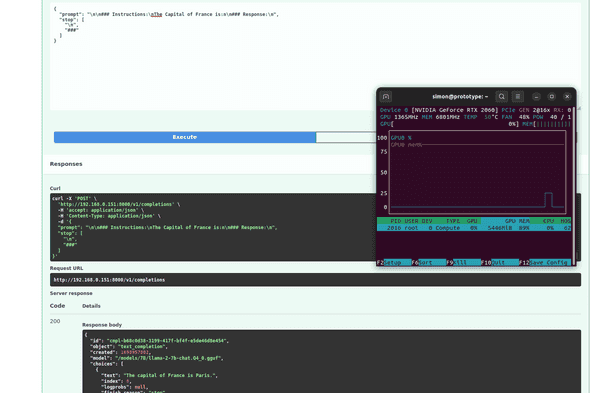

The docs will also let you try out a query using the Try it out button. Here you can see the response for "The capital of France is:" and the corresponding increase in GPU usage as the LLM processes a response.
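The same query can be sent from a script instead of the docs page. This is a minimal sketch using only the standard library. It assumes the server exposes an OpenAI-style `/v1/completions` endpoint on port 8000 and returns the generated text under `choices[0].text`, mirroring OpenAI's response shape. The function names and the `max_tokens` default are illustrative.

```python
import json
import urllib.request


def build_completion_request(host, prompt, max_tokens=16):
    """Build a POST request for an OpenAI-style text completion."""
    payload = {"prompt": prompt, "max_tokens": max_tokens}
    return urllib.request.Request(
        url=f"http://{host}:8000/v1/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_text(response_body):
    """Pull the generated text out of an OpenAI-style completion response."""
    return response_body["choices"][0]["text"]


def complete(host, prompt):
    """Send the prompt to the server and return the generated text."""
    request = build_completion_request(host, prompt)
    with urllib.request.urlopen(request, timeout=60) as response:
        return extract_text(json.load(response))
```

With the container running, `complete("<host_ip_address>", "The capital of France is:")` should return the model's continuation as a string.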

Conclusion

The next steps for me are to try out the CodeLlama model, a model optimised to produce Python code, and to see if I can connect it easily with VS Code, providing a locally hosted version of something like GitHub Copilot without the subscription cost.